Set up governance and risk correctly

Article by neuland AI

Governance is not a brake but an enabler

Anyone introducing generative AI faces a paradoxical requirement: moving quickly enough to realise competitive advantages, while proceeding in a sufficiently controlled way so as not to underestimate the risks. The solution lies not in less governance, but in better governance.

A robust governance framework for AI begins with one key insight: generative AI is not traditional software. It operates probabilistically, its results are not fully reproducible, and the risks range from hallucinations and data protection breaches to ethical bias.

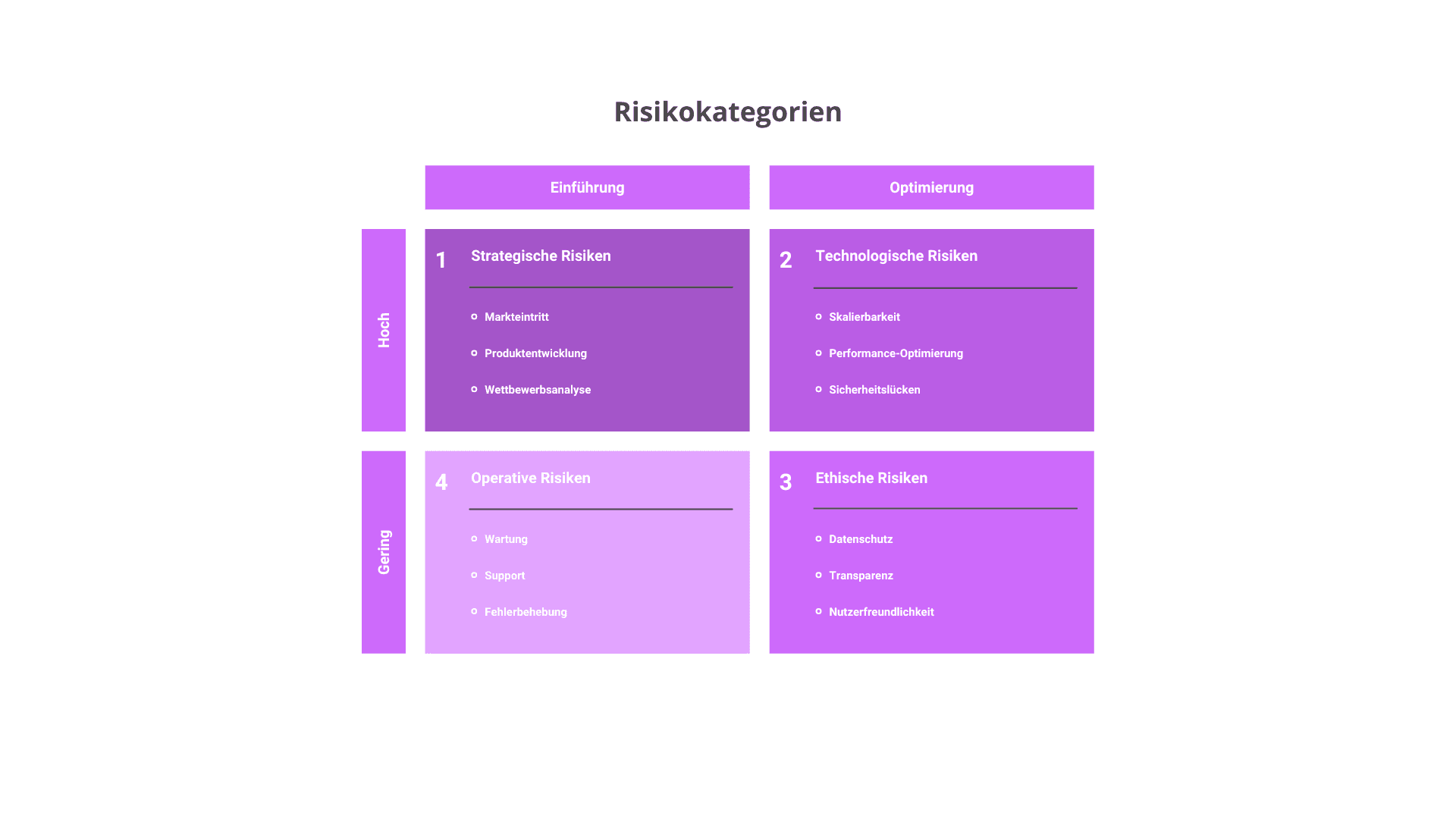

Three risk categories every company must know

Strategic, financial and regulatory risks concern dependence on third-party providers, data protection breaches and compliance with regulatory requirements. Integrating too late can lead to competitive disadvantages, while erroneous outputs can lead to poor business decisions.

Ethical risks relate to bias and discrimination. Generative systems can reinforce unconscious prejudices, whether through unbalanced data or through distortions inherent in the model itself. Transparency and accountability are key issues here.

Technological and model-specific risks include hallucinations, lack of transparency, security vulnerabilities and insufficient robustness. In multi-agent systems, these risks are amplified further: individual agents act autonomously, which can open the door to uncontrolled API calls or data access.
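One common way to contain the multi-agent risk is to route every tool call through a gate that only permits explicitly allowlisted actions and logs every decision. The following is a minimal sketch under stated assumptions: the agent and tool names (`research_agent`, `search_docs`, `delete_records`, etc.) and the `ToolCallGate` class are hypothetical and do not refer to any specific agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCallGate:
    """Hypothetical guardrail: each agent may only call tools on its own allowlist."""

    allowlist: dict[str, set[str]]
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, agent: str, tool: str) -> bool:
        # Unknown agents get an empty allowlist, so their calls are denied by default.
        allowed = tool in self.allowlist.get(agent, set())
        # Record every decision so that blocked calls can be reviewed later.
        self.audit_log.append((agent, tool, allowed))
        return allowed


gate = ToolCallGate(
    allowlist={
        "research_agent": {"search_docs"},
        "billing_agent": {"read_invoice"},
    }
)

print(gate.authorize("research_agent", "search_docs"))     # True
print(gate.authorize("research_agent", "delete_records"))  # False
print(gate.authorize("unknown_agent", "search_docs"))      # False
```

Deny-by-default is the design choice here: an agent that is not registered, or a tool that was never approved, cannot be called at all, and the audit log feeds the monitoring step of the risk management cycle.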

The risk management cycle for AI

Effective risk management for AI follows a structured cycle. First, risks are identified once the objectives have been defined and the GenAI inventory has been analysed. Second, the identified risks are assessed in terms of likelihood of occurrence and impact. Third, they are controlled through targeted measures, from technical adjustments and security measures to ethical reviews. Finally, these measures are implemented, monitored and improved, because AI risks are dynamic and require continuous attention.
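The assessment step is often operationalised as a simple risk matrix: likelihood and impact are each rated on an ordinal scale, multiplied into a score, and sorted to decide which risks to control first. The sketch below is illustrative only. The scales from 1 to 5, the thresholds and the example risks are assumptions and are not prescribed by the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    category: str    # one of the three categories described above
    likelihood: int  # assumed ordinal scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed ordinal scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Classic risk-matrix score: likelihood multiplied by impact (range 1 to 25).
        return self.likelihood * self.impact

    @property
    def level(self) -> str:
        # Illustrative thresholds; each organisation sets its own risk appetite.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


# Hypothetical entries in a GenAI risk register.
register = [
    Risk("Hallucinated facts in customer answers", "technological", 4, 4),
    Risk("Personal data sent to a third-party API", "strategic/financial/regulatory", 2, 5),
    Risk("Biased ranking of job applicants", "ethical", 3, 5),
    Risk("Dependence on a single model provider", "strategic/financial/regulatory", 3, 3),
]

# Highest score first: these risks are the first candidates for controls.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk.score:>2}  {risk.level:<6}  {risk.name}")
```

Because the scores are recomputed from the ratings, re-running the assessment after a control has lowered a likelihood or impact directly shows how the priority order shifts, which matches the continuous nature of the cycle.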

Internal control system: seven criteria as guardrails

For establishing an internal control system (ICS), seven criteria have proven effective: human agency and oversight, technical robustness and security, data protection and governance, transparency and explainability, diversity and non-discrimination, social and environmental well-being, and accountability.

These criteria form the basis for specific controls: from human review of generated outputs and mechanisms for bias detection to clear escalation paths in the event of undesirable system behaviour.
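A control such as "human review of generated outputs with a clear escalation path" can be written down as an explicit routing rule. The following is a minimal sketch under assumptions: the confidence score, bias flag and policy flag are supposed to come from hypothetical upstream checks, and the threshold of 0.8 and the three routes are illustrative choices rather than part of the article.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    RELEASE = "release"            # output may be used without further review
    HUMAN_REVIEW = "human_review"  # a person checks the output before it is used
    ESCALATE = "escalate"          # the responsible owner or risk function is notified


@dataclass
class GeneratedOutput:
    text: str
    confidence: float       # hypothetical score from an upstream check, 0.0 to 1.0
    bias_flagged: bool      # hypothetical result of a bias-detection check
    policy_violation: bool  # hypothetical result of a content or policy filter


def route_output(output: GeneratedOutput, min_confidence: float = 0.8) -> Route:
    # Undesirable system behaviour goes straight to the escalation path.
    if output.policy_violation:
        return Route.ESCALATE
    # Bias flags or low confidence require human oversight before release.
    if output.bias_flagged or output.confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.RELEASE


print(route_output(GeneratedOutput("Quarterly summary", 0.93, False, False)).value)
```

Checking the escalation condition first ensures that a policy violation is never merely queued for routine review, even when the output also carries a bias flag or low confidence.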

Conclusion

Governance for generative AI is not a one-off setup, but a living process. Those who create the right structures early can scale faster – because trust in the technology is well-founded. The next part will look at how use cases are systematically identified, evaluated and prioritised.